In a move set to redefine the front lines of digital defense, Google Cloud has announced a groundbreaking vision for cybersecurity. It’s not just another tool or platform. It’s a fundamental paradigm shift, an answer to an industry at its breaking point. Google is introducing a true “AI ally,” an agentic security partner designed to work alongside human teams, empowering them to fight back against the escalating tide of digital threats.

The announcement, a centerpiece of the Google Cloud Security Summit 2025, details a future where artificial intelligence doesn’t just assist security operations but actively participates in them. This new approach, dubbed “agentic security,” aims to transform how organizations detect, investigate, and respond to cyberattacks, moving defenders from a state of reactive exhaustion to one of proactive command. The core idea? To embed a world-class security analyst and engineer, powered by Google’s advanced AI, into the very fabric of a company’s defense system.

The Breaking Point: Why Cybersecurity Needed a Revolution

Let’s be honest, the world of cybersecurity has been a pressure cooker for years. Security teams, the unsung heroes of the digital age, are overwhelmed. They are outgunned and, frankly, burning out.

Here’s the problem in a nutshell: a deluge of data. Modern enterprises use dozens of security tools, each generating its own stream of alerts, logs, and warnings. Human analysts are expected to manually sift through this noise, trying to connect the dots between a suspicious login in one system and a strange data movement in another. It’s a monumental, often impossible, task. This phenomenon, often called “tool fatigue,” leaves even the most skilled professionals exhausted and desensitized to the constant alarm bells.

And the other side of the battlefield? It’s evolving at a terrifying pace. Threat actors are no longer just lone hackers in basements. They are sophisticated, well-funded syndicates, and increasingly, they have their own AI toolsets. As Pete Burke, a federal field CISO at CDW Government, points out, attackers are now using AI to scale their operations, automate their methods, and mask their activities in ways that were previously unimaginable. The old patterns, the human schedules, and tell-tale signs of a manual attack are disappearing.

This creates a dangerous asymmetry. A double-edged sword. While defenders struggle with manual processes, attackers are leveraging automation to execute assaults with terrifying speed and precision. The industry needed more than just a faster version of the old way. It needed a revolution.

Enter the AI Ally: Google’s Vision for Agentic Security

Google’s answer isn’t another box on the diagram. It’s a partner in the trenches. This is the essence of their new vision for agentic security. Powered by Gemini, Google’s flagship AI model, this new ally is deeply integrated into the Google SecOps platform, designed to function as an extension of the human security team.

So what does this AI partner actually do?

Imagine an analyst, we’ll call her Jane. A critical alert comes in. Instead of spending the next four hours pulling logs from ten different systems, Jane simply asks the AI ally in natural language: “Show me all activity from this IP address in the last 24 hours and summarize any unusual behavior.”

The AI doesn’t just dump raw data. It analyzes, correlates, and provides a concise summary, highlighting the most critical events. It might say, “This IP, originating from a known malicious network, accessed the production database, attempted to escalate privileges, and exfiltrated a small data packet. This behavior matches the pattern of the threat group ‘Crimson Shadow.'”

But it doesn’t stop there. The AI ally then provides guided response options. It might recommend quarantining the affected server, blocking the IP at the firewall, and automatically generating a ticket for the IT team with a single click for Jane to approve. This is the core of the new workflow: a seamless collaboration between human expertise and AI-driven scale and speed. It’s about reducing the manual, repetitive toil so that human analysts can focus on the bigger picturestrategy, threat hunting, and making the critical decisions that machines can’t.

Under the Hood: The Technology Powering the New Defense

This ambitious vision is supported by a suite of new and enhanced technologies, all working in concert within the Google Cloud ecosystem. At the center of it all is the Security Command Center (SCC), which acts as the central nervous system for this new defensive posture.

AI Protection and Model Armor

A key pillar of this new strategy is AI Protection, a solution dedicated to mitigating risks across the entire AI lifecycle. In today’s world, it’s not enough to use AI for defense; you have to defend the AI itself. AI Protection provides a multifaceted approach to managing this new risk landscape.

A critical component of this is Model Armor. Think of it as a bodyguard for an organization’s own AI models. It actively screens the prompts being fed into an AI and the responses coming out, guarding against a new class of attacks like prompt injection, jailbreaks, and sensitive data leakage. The goal is to ensure that the AI systems a company relies on can’t be tricked or manipulated by attackers.

The value is already being seen in the real world. Jay DePaul, the chief cybersecurity and technology risk officer at Dun & Bradstreet, praised the system, stating, “We are using Model Armor not only because it provides robust protection… but because we’re getting a unified security posture from Security Command Center”. This allows his teams to quickly identify and respond to vulnerabilities without slowing down development.

Agentic IAM: Giving AI an Identity

One of the more forward-looking announcements is Agentic IAM. As companies deploy more autonomous AI agents to perform tasks, a critical question arises: Who watches the watchers? Agentic IAM is Google’s answer. It’s a new identity and access management framework specifically for AI agents.

This system will allow organizations to provision unique identities for their agents, set granular policies for what they can and cannot do, and maintain end-to-end observability of their actions across cloud environments. It’s a crucial step toward ensuring that as AI becomes more powerful and autonomous, it remains secure and under human control.

Gemini: The Brains of the Operation

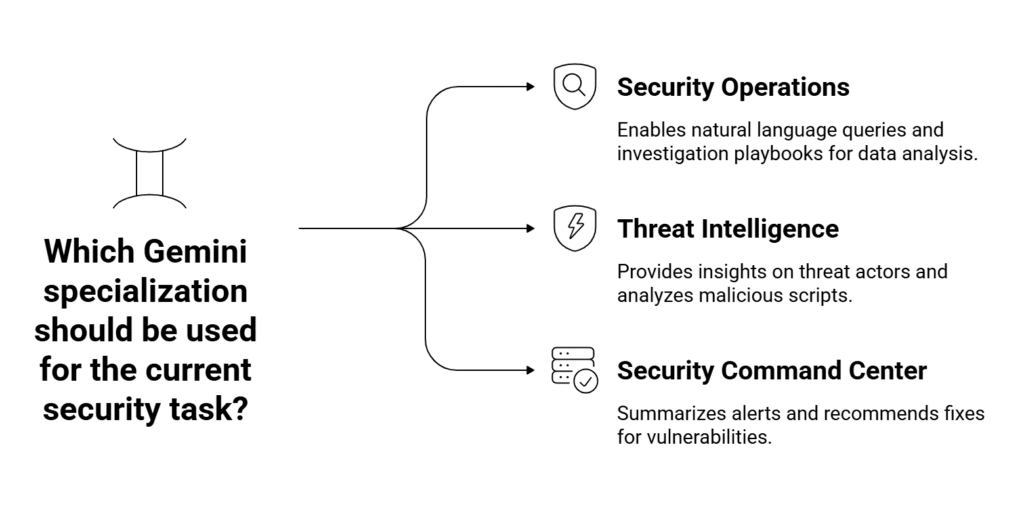

The intelligence driving this entire system is Gemini, Google’s most advanced AI. But it’s not a single, monolithic AI. Instead, Gemini specializes in different security tasks:

- Gemini in Security Operations: This is the conversational interface, the part that allows analysts to query data in natural language, build investigation playbooks, and get instant insights from mountains of data.

- Gemini in Threat Intelligence: This version taps directly into the frontline expertise of Mandiant, Google’s elite threat intelligence unit. It can provide instant summaries on threat actors, their tactics, and their motivations. It even includes a feature called Code Insight, which can analyze potentially malicious scripts and explain their behavior in plain English, removing the need for time-consuming reverse engineering.

- Gemini in Security Command Center: Here, Gemini’s job is to cut through the noise. It automatically summarizes high-priority alerts for misconfigurations and vulnerabilities, highlighting the potential impact and recommending how to fix the problem before it can be exploited by an attacker.

A Foundation of Continuous Improvement

Beyond these headline features, Google also announced a suite of updates to the underlying platform. A new Compliance Manager helps automate the complex work of meeting regulatory requirements, while a Data Security Posture Management service provides governance for sensitive data. New Risk Reports, powered by a virtual red team, can even simulate attacks to pinpoint gaps in a company’s defenses before a real adversary finds them.

A Proactive Stance: Securing AI from the Ground Up

Perhaps the most mature aspect of Google’s strategy is its dual focus. It’s not just about using AI to secure the enterprise; it’s about securing the use of AI itself. This holistic view is embodied in Google’s Secure AI Framework (SAIF), a comprehensive guide for building, deploying, and managing AI systems safely.

The framework acknowledges that AI introduces new and unique risks. The data used to train models could be poisoned, the models themselves could be stolen, or they could be manipulated to produce harmful outputs. SAIF provides a structured taxonomy of these risks and recommends concrete mitigations.

The new capabilities announced at the Security Summit directly support this framework. The expanded AI agent inventory feature, for example, helps organizations automatically discover where and how AI is being used in their environment, a critical first step for effective risk management. This is paired with services from Mandiant Consulting, which can help organizations assess the security of their AI pipelines and even conduct red-teaming exercises to test their AI defenses against simulated attacks. It’s a combination of powerful technology and deep human expertise.

What This Means for the Future of Cybersecurity

So what’s the bottom line? This is more than just a product launch; it’s a glimpse into the future of the industry. A future built on three core pillars.

First, the democratization of expertise. For too long, top-tier security analysis has been the domain of a select few experts. AI allies like this can act as a force multiplier, upskilling junior analysts and empowering non-experts to investigate threats with confidence. The AI provides the context and guidance, allowing a much broader range of people to contribute effectively to an organization’s defense.

Second, the definitive shift from reactive to proactive. The old model of cybersecurity was to wait for an alarm and then scramble to respond. The new model is about continuously hunting for threats and hardening defenses. Tools like the AI-powered Risk Reports allow teams to find and fix their weaknesses before they become tomorrow’s breach headline.

And finally, this solidifies the new paradigm of human-machine teaming. The future of security isn’t about AI replacing humans. It’s about augmentation. The AI ally handles the crushing scale and inhuman speed of modern cyber warfare, processing trillions of signals in real-time. This frees up human experts to do what they do best: think critically, understand context, and make strategic decisions.

The road ahead for cybersecurity is still challenging. The threats will continue to evolve. But with the introduction of a true AI ally, defenders now have a powerful new partner in the fight, one that can help level the playing field and, just maybe, give the heroes a fighting chance to win.